(This is a guest post by xorhash.)

Introduction

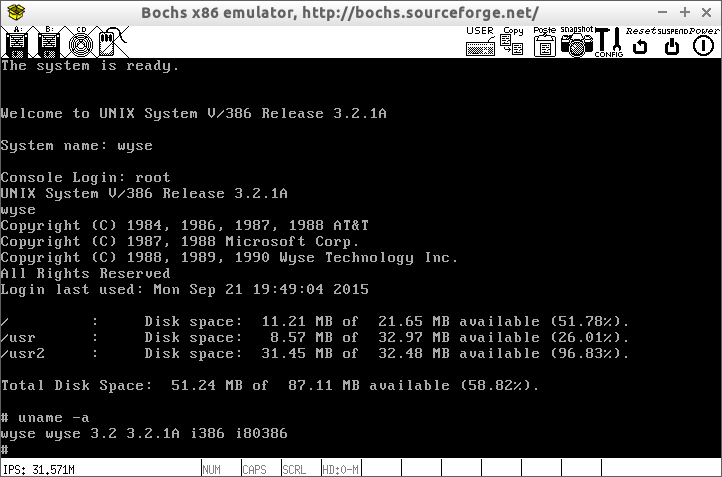

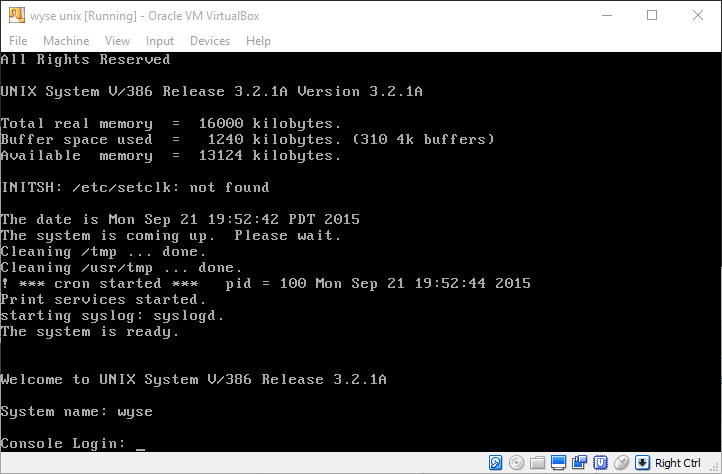

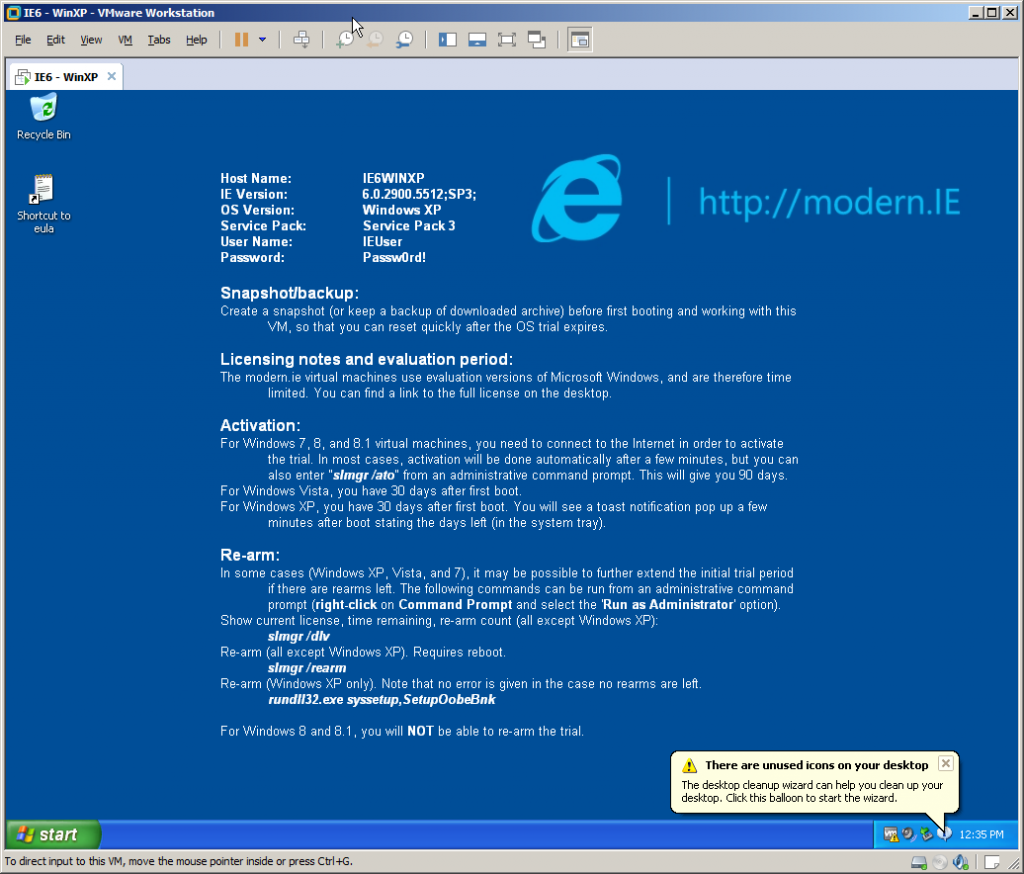

I have been messing with the UNIX®† operating system, Seventh Edition (commonly known as UNIX V7 or just V7) for a while now. V7 dates from 1979, so it’s about 40 years old at this point. The last post was on V7/x86, but since I’ve run into various issues with it, I moved on to a proper installation of V7 on SIMH. The Internet has some really good resources on installing V7 in SIMH. Thus, I set out on my own journey on installing and using V7 a while ago, but that was remarkably uneventful.

One convenience that I have been dearly missing since the switch from V7/x86 is a functioning backspace key. There seem to be multiple different definitions of backspace:

- BS, as in ASCII character 8 (010, 0x08, also represented as ^H), and
- DEL, as in ASCII character 127 (0177, 0x7F, also represented as ^?).

V7 does not accept either for input by default. Instead, # is used as the erase character and @ is used as the kill character. These defaults have been there since UNIX V1. In fact, they have been “there” since Multics, where they got chosen seemingly arbitrarily. The erase character erases the character before it. The kill character kills (deletes) the whole line. For example, “ba##gooo#d” would be interpreted as “good” and “bad line@good line” would be interpreted as “good line”.
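The erase and kill semantics can be illustrated with a small C sketch. This is not the V7 tty code; `cook_line` is a hypothetical helper that only mimics the two rules described above and ignores everything else the real driver does.

```c
#include <stddef.h>

/*
 * Hypothetical helper, not taken from V7: apply an erase and a kill
 * character to raw input. The result is written to out (NUL-terminated)
 * and its length is returned. out must have room for strlen(in) + 1 bytes.
 */
size_t cook_line(const char *in, char erase, char kill, char *out)
{
    size_t n = 0;

    for (; *in != '\0'; in++) {
        if (*in == erase) {
            if (n > 0)          /* drop the previous character, if any */
                n--;
        } else if (*in == kill) {
            n = 0;              /* throw away the whole line so far */
        } else {
            out[n++] = *in;     /* ordinary character: keep it */
        }
    }
    out[n] = '\0';
    return n;
}
```

Calling `cook_line("ba##gooo#d", '#', '@', buf)` leaves "good" in `buf`, and the input "bad line@good line" yields "good line", matching the two examples in the text.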

There is some debate on whether BS or DEL is the correct character for terminals to send when the user presses the backspace key. However, most programs have settled on DEL today. tmux forces DEL, even if the terminal emulator sends BS, so simply changing my terminal to send BS was not an option. The change from the defaults outlined here to today’s modern-day defaults occurred between 4.1BSD and 4.2BSD. enf on Hacker News has written a nice overview of the various conventions.

Changing the Defaults

These defaults can be overridden, however. Any character can be set as erase or kill character using stty(1). It accepts the caret notation, so that ^U stands for ctrl-u. Today’s defaults (and my goal) are:

| Function | Character |
|---|---|
| erase | DEL (^?) |
| kill | ^U |

In the caret notation, ^ stands for ctrl. Holding ctrl while typing a character has the effect of bitwise-ANDing it with 037 (0x1F), as implemented in /usr/src/cmd/stty.c and mentioned in the stty(1) man page. This is where the notation as understood by stty(1) breaks down for ^?: ASCII ? bitwise-AND 037 is US (unit separator), not DEL, so ^? requires special handling, and stty(1) in V7 does not know about this special case. Because of this, a separate program, or a change to stty(1), is required to call stty(2) and change the erase character to DEL. Changing the kill character was easy enough, however:

    $ stty kill '^U'
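To make the masking concrete, here is a minimal C sketch of the caret mapping. Both function names are made up for illustration. The first mirrors the plain AND with 037 described above. The second adds the DEL special case that V7's stty(1) lacks and is only an assumption about how such a fix could look, not the code from the diff.

```c
/* Plain mapping as described for V7 stty(1): keep the low five bits. */
int caret_plain(int c)
{
    return c & 037;
}

/* Hypothetical variant that treats ^? as DEL (0177) instead of US (037). */
int caret_with_del(int c)
{
    if (c == '?')
        return 0177;
    return c & 037;
}
```

With the plain mapping, `U` (0125) becomes 025 as intended, while `?` (077) becomes 037, which is US rather than DEL.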

So I wrote that program and found out that DEL still didn’t work as expected, though ^U did. # stopped working as erase character, so something certainly did change. This is because in V7, DEL is the interrupt character. Today, the interrupt character is ^C.

Clearly, I needed to change the interrupt character. But how? Given that neither stty(1) nor the underlying syscall stty(2) seemed to let me change it, I looked at the code for tty(4) in /usr/sys/dev/tty.c and /usr/sys/h/tty.h. And in the header file, lo and behold:

    #define	CERASE	'#'		/* default special characters */
    #define	CEOT	004
    #define	CKILL	'@'
    #define	CQUIT	034		/* FS, cntl shift L */
    #define	CINTR	0177		/* DEL */
    #define	CSTOP	023		/* Stop output: ctl-s */
    #define	CSTART	021		/* Start output: ctl-q */
    #define	CBRK	0377

I assumed just changing these defaults would fix the defaults system-wide, which I found preferable to a solution in .profile anyway. Changed the header, one cycle of make all, make unix, cp unix /unix and a reboot later, the system exhibited the same behavior. No change to the default erase, kill or interrupt characters. I double-checked /usr/sys/dev/tty.c, and it indeed copied the characters from the header. Something, somewhere must be overwriting my new defaults.

Studying the man pages in vol. 1 of the manual, I found that on multi-user boot init calls /etc/rc, then calls getty(8), which then calls login(1), which ultimately spawns the shell. /etc/rc didn’t do anything interesting related to the console or ttys, so the culprit must be either getty(8) or login(1). As it turns out, both of them are the culprits!

getty(8) changes the erase character to #, the kill character to @ and the struct tchars to { '\177', '\034', '\021', '\023', '\004', '\377' }. At this point, I realized that:

- there’s a struct tchars,
- it can be changed from userland.

The first member of struct tchars is char t_intrc, the interrupt character. So I could’ve had a much easier solution by writing some code to change the struct tchars, if only I’d actually read the manual. I’m too far in to just settle with a .profile solution and a custom executable, though. Besides, I still couldn’t actually fix my typos at the login prompt unless I make a broader change. I’d have noticed the first point if only I’d actually read the man page for tty(4). Oops.
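For illustration, the userland route might look roughly like the sketch below. `struct v7_tchars` is a local stand-in so that the example compiles anywhere. Only `t_intrc` is named in the text; the remaining field names are how I recall them from tty(4) and should be treated as assumptions, as should the ioctl mentioned in the comment.

```c
/* Local stand-in for V7's struct tchars; field names other than
 * t_intrc are assumptions based on tty(4). */
struct v7_tchars {
    char t_intrc;   /* interrupt */
    char t_quitc;   /* quit */
    char t_startc;  /* start output */
    char t_stopc;   /* stop output */
    char t_eofc;    /* end of file */
    char t_brkc;    /* input delimiter */
};

/* The values getty(8) installs, in the order quoted above. */
struct v7_tchars getty_defaults(void)
{
    struct v7_tchars t = { '\177', '\034', '\021', '\023', '\004', '\377' };
    return t;
}

/* Return a copy with the interrupt character moved from DEL to ^C.
 * On V7 itself the result would presumably be applied with something
 * like ioctl(fd, TIOCSETC, &t); that call is not made here. */
struct v7_tchars with_ctrl_c_interrupt(struct v7_tchars t)
{
    t.t_intrc = 003;
    return t;
}
```

Only the interrupt character changes; the quit, start, stop and end-of-file characters keep the values getty(8) sets.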

login(1) changes the erase character to # and the kill character to @. At least the shell leaves them alone. Seriously, three places to set these defaults is crazy.

Fixing the Characters

The plan was simple, namely, perform the following substitution:

| Function | Old Character | Old Character ASCII (Octal) | New Character | New Character ASCII (Octal) |
|---|---|---|---|---|
| erase | # | 043 | DEL | 0177 |
| kill | @ | 0100 | ^U | 025 |
| interrupt | DEL | 0177 | ^C | 003 |

So, I changed the characters in tty(4), getty(8) and login(1). It worked! Almost. Now DEL did indeed erase. However, there was no feedback for it. When I typed DEL, the cursor would stay where it was.

Pondering the code for tty(4) again, I found that there is a variable called partab, which determines delays and what kind of special handling to apply if any. In particular, BS has an entry whose handler looks like this:

    /* backspace */
    case 2:
        if (*colp)
            (*colp)--;
        break;

Naïve as I was, I just changed the entry for DEL from “non-printing” to “backspace”, hoping that would help. Another recompilation cycle and reboot later, nothing changed. DEL still only silently erased. So I changed the handler for another character, recompiled, rebooted. Nothing changed. Again. At that point, I noticed something else must have been up.

I found out that the tty is in so-called echo mode. That means that all characters typed get echoed back to the tty. It just so happens that the representation of DEL is actually none at all. Thus it only looked like nothing changed, while the character was actually properly echoed back. However, when temporarily changing the erase character to BS (^H) and typing ^H manually, I would get the erase effect and the cursor moved back by one character on screen. When changing the erase character to something else like # and typing ^H manually, I would get no erasure, but the cursor moved back by one character on screen anyway. I now properly got the separation of character effect and representation on screen. Because of this unprintable-ness of DEL, I needed to add a special case for it in ttyoutput():

    if (c==0177) {
        ttyoutput(010, tp);
        ttyoutput(' ', tp);
        ttyoutput(010, tp);
        return;
    }

What this does is first send a BS to move the cursor back by one, then send a space to rub out the previous character on screen and then send another BS to get to the previous cursor position. Fortunately, my terminal lives in a world where doing this is instantaneous.
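The same rub-out sequence can be tried outside the kernel. The following is a standalone sketch with a made-up function name, `echo_bytes`, that returns the bytes a terminal would receive for one echoed character. It is not the kernel's `ttyoutput()` and leaves out column tracking and delays.

```c
#include <stddef.h>

/*
 * Hypothetical userland model of the echo special case: for DEL, emit
 * BS, space, BS; for anything else, emit the character unchanged.
 * out must have room for at least 3 bytes. Returns the byte count.
 */
size_t echo_bytes(int c, char *out)
{
    if (c == 0177) {
        out[0] = 010;   /* move the cursor back by one */
        out[1] = ' ';   /* overwrite the old character */
        out[2] = 010;   /* move back onto the blanked cell */
        return 3;
    }
    out[0] = (char)c;
    return 1;
}
```

Writing the three bytes for DEL to a modern terminal emulator should visually remove the character to the left of the cursor, which is the effect described above.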

Getting the Diff

For future generations as well as myself when I inevitably majorly break this installation of V7, I wanted to make a diff. However, my V7 is installed in SIMH. I am not a very intelligent man: I didn’t keep backup copies of the files I’d changed. Getting data out of this emulated machine is an exercise in frustration.

Transmission over ethernet is out by virtue of there being no ethernet in V7. I could simulate a tape drive and write a tar file to it, but neither did I find any tools to convert from simulated tape drive to raw data, nor did I feel like writing my own. I could simulate a line printer, but neither did V7 ship with the LP11 driver (apparently by mistake), nor did I feel like copy/pasting a long lpr program in – a simple cat(1) to /dev/lp would just generate fairly garbled output. I could simulate another hard drive, but even if I format it, nothing could read the ancient file system anyway, except maybe mount_v7fs(8) on NetBSD. Though setting up NetBSD for the sole purpose of mounting another virtual machine’s hard drive sounds silly enough that I might do it in the future.

While V7 does ship with uucp(1), it requires a device to communicate through. It seems that communication over a tty is possible V7-side, but in my case, quite difficult. I use the version of SIMH as packaged on Debian because I’m a lazy person. For some reason, the DZ11 terminal emulator was removed from that package. The DUP11 bit synchronous interface, which I hope is the same as the DU-11 mentioned in /usr/sys/du.c, was not part of SIMH at the time of packaging. V7 only speaks the g protocol (see Ptbl in /usr/src/cmd/uucp/cntrl.c), which requires the connection to be 8-bit clean. Even if the simulator for a DZ11 were packaged, it would most likely be unsuitable because telnet isn’t 8-bit clean by default and I’m not sure if the DZ11 driver can negotiate 8-bit clean Telnet. That aside, I’m not sure if Taylor UUCP for Linux would be able to handle “impure” TCP communications over the simulated interface, rather than a direct connection to another instance of Taylor UUCP. Then there is the issue of general compatibility between the two systems. As reader DOS pointed out, there seem to be quite a few difficulties. Those difficulties were experienced on V7/x86, however. I’m not ruling out that the issues encountered are specific to V7/x86. In any case, UUCP is an adventure for another time. I expect it’ll be such a mess that it’ll deserve its own post.

In the end, I printed everything on screen using cat(1) and copied that out. Then I performed a manual diff against the original source code tree because tabs got converted to spaces in the process. Then I applied the changes to clean copies that did have the tabs. And finally, I actually invoked diff(1).

Closing Thoughts

Figuring all this out took me a few days. Understanding how the system is put together was surprisingly hard at first, but then the difficulty curve eased up. It was an interesting exercise in some kind of “reverse engineering” and I definitely learned something about tty handling. I was, however, not pleased with using ed(1), even if I do know the basics. vi(1) is a blessing that I did not appreciate enough until recently. Had I also been unable to access recursive grep(1) on my host and scroll through the code, I would’ve probably given up. Writing UNIX under those kinds of editing conditions is an amazing feat. I have nothing but the greatest respect for software developers of those days.

Here’s the diff, but V7 predates patch(1), so you’ll be stuck manually applying it: backspace.diff

† UNIX is a trademark of The Open Group.